DCC Roadshow in Cardiff

Posted by Marieke Guy on 15th December 2011

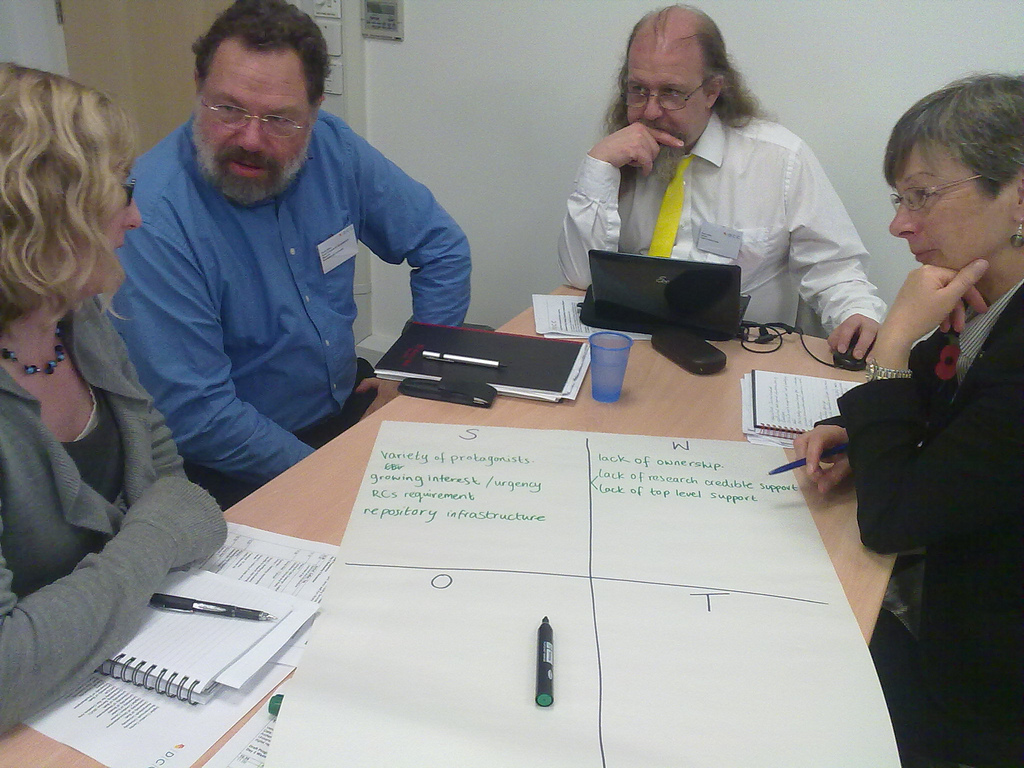

Snow, sleet, hailstones, rain and sunshine! The Cardiff weather couldn’t make up its mind, but the Digital Curation Centre (DCC) roadshow carried on regardless. Although I have attended various days of the travelling roadshow (Bath and Cambridge) I’ve never actually managed to catch a day one. The opening day is an opportunity to hear an overview of the research data management landscape and is also the day on which local case studies make it onto the agenda, so I was looking forward to it.

Welcome: Janet Peters, Cardiff University

Janet Peters, Director of University Libraries and University Librarian for Cardiff University, opened the day by saying how keen she was to have the roadshow take place locally, feeling it to be very timely given current research data management (RDM) work in Cardiff. Janet explained that her attendance at the Bath roadshow had kick-started Cardiff’s work in this area. Cardiff have recently revitalised their digital preservation group and have been providing guidance and assisting departments with implementing changes to their RDM processes – more on this later. They have also recently rolled out an institutional repository, though it doesn’t cover data sets (at the moment).

The Changing Data Landscape: Liz Lyon, UKOLN

Liz set the scene for the day by outlining the current data landscape. She began by introducing the new BIS report entitled Innovation and Research Strategy for Growth, which expresses the government’s support for open data and introduces the Open Data Institute (ODI). Only last week David Cameron made the suggestion that “every NHS patient should be a ‘research patient’ with their medical details ‘opened up’ to private healthcare firms”. Openness and access to data are two of the biggest challenges of the moment and have stimulated much debate. Liz gave the controversial example of one tobacco company’s FOI request to the University of Stirling for information relating to a survey on the smoking habits of teenagers. She explained that a proposed FOI amendment will allow institutions to ask for exemption from FOI requests while research is ongoing. It’s often the case that researchers don’t want to share data, and there have been instances when governments have placed restrictions on data use (e.g. the Bring Your Genes to Cal project). Liz shared some more positive examples of research data being shared, e.g. Alzheimer’s research, the 1000 Genomes Project, the Personal Genome Project and openSNP. She also offered some citizen science examples: BBC Nature, Project Noah http://www.projectnoah.org/, Galaxy Zoo, Patients Participate, BBC Lab. The Panton Principles are a recent set of guidelines that offer possible approaches: open knowledge, open data, open content and open service. To some degree the key to all of this is knowing about data licensing, and the DCC offer advice in this area.

Liz then moved on to what is often seen as the biggest challenge of all: the sheer volume of data now created, e.g. by the Large Hadron Collider. In the genomics area there are lots of shocking statistics on the growth of data and the implications of this. Another new report, the PHG Foundation’s Next steps in the sequence, sets out the implications of this data deluge for the NHS. The book The Fourth Paradigm highlights data-intensive research as being the next step in research. The DCC are working with Microsoft Research Connections to create a community capability model for data-intensive research.

It is apparent that big data is being lost, but so is small data (like Excel spreadsheets), and part of the challenge is working out how scientists can deal with the long tail. What is framed as the gold standard is when you can fully replicate the code and the data; reproducible research is the second-best approach. Data storage needs to be scalable, cost-effective, secure, robust and resilient, with a low entry barrier and ease of use. Liz also asked us to consider the role of cloud services, giving Dataflow http://www.dataflow.ox.ac.uk/, VIDaaS, BRISSKit and lab notebook as four JISC projects to follow in this area.

Liz then talked a little about policy, giving research council examples. The most relevant is the fairly demanding set of EPSRC expectations, which have serious implications for HEIs: institutions must provide an RDM roadmap by 1st May 2012 and must be compliant with these expectations by 1st May 2015. At the University of Bath, where Liz is based, there is a new project called research360@Bath, which has a particular faculty-industry focus. There will also be a new data scientist role based at UKOLN. A full list of funders and their requirements is available from the DCC Web site.

Resources are available, and the Incremental project http://www.lib.cam.ac.uk/preservation/incremental/ back in 2010 found that many people felt that institutional policies were needed in the RDM area. Edinburgh have developed an aspirational data management policy. The DCC have pulled together exemplars of data policy information http://www.dcc.ac.uk/resources/policy-and-legal/institutional-data-policies; ANDS also have a page on local policy.

It is also important to consider how you incentivise data management. There is quite a lot of current work on impact, data citation and DOIs. Some example projects: Total Impact http://total-impact.org/ and SageCite.

And what about the cost? Useful resources include the Charles Beagrie report on Keeping Research Data Safe http://www.beagrie.com/jisc.php. Neil Beagrie has also done some work on helping people articulate the benefits through use of a benefits framework tool.

In conclusion Liz asked delegates to think about the gaps in their institution.

Digital Data Management Pilot Projects: Sarah Philips, Cardiff University

Sarah explained that at Cardiff the University has retention requirements for quite a lot of corporate records and permanent records. They also have requirements to keep some of their research data for 5-30 years. In response to feedback from a digital preservation policy, the University has set up three pilot projects: in the cultural area, in the School of Biosciences using genomic data, and in the School of History and Archaeology. Work in the School of History and Archaeology is now coming to a close, and this is the area Sarah would concentrate on.

Three projects within the department were used as a test bed. The South Romanian Archaeological Project (SRAP) at the University had collected excavation data and the team have been keen to make the data available. The Magura Past and Present Project had artists coming in and creating art; because the project was an engagement project it was required that the outputs be available, though not necessarily the data. The final project was on auditory archaeology. All three projects were run by Dr Steve Mills.

Records management audits were carried out through face-to-face interviews with staff using the DCC’s Data Asset Framework. Questions included: what records and data are held? How are the records and data managed and stored? What are the staff member’s requirements? A data asset register was created that dealt with lots of IP issues, ownership issues etc. Once this data was collected potential risks were identified: for example, Dr Mills had been storing data on whatever hard drives were available without a systematic approach, there was some metadata available but file structure was an issue, proprietary formats were used and there were no file naming procedures in place. Dr Mills was keen to make the data accessible, so the RDM team have been looking at depositing it with the Archaeology Data Service; if this solution isn’t feasible they will have to use an institutional solution.

High Performance Computing and the Challenges of Data-intensive Research: Martyn Guest, Cardiff University

Martyn started off by giving an introduction to Advanced Research Computing at Cardiff (ARCCA), which was established in 2008. Chemistry and physics have been the biggest users of high performance computing so far, but the data problem is relatively new and has really arisen since the explosion of data use by the medical and humanities schools.

He sees the challenges as being technical (quality, performance, metadata, security, ownership, access, location and longevity), political (centralisation vs departmental, governance, ownership), personal, financial (sustainability), and legal and ethical (DP, FOI). Martyn showed us their data-intensive supercomputer (‘Gordon’) and a lot of big numbers (for file sizes) were bandied about! Gordon runs large-memory applications (supermode) with 512 cores, 2 TB of RAM and 9.6 TB of flash. It has been the case that NERC has spent a lot of time moving data, leaving less effort for analysing the data.

Martyn shared a couple of case studies. The first was Positron Emission Tomography (PET) imaging data, where the biggest issues were that the data was raw and that researchers weren’t interested in patient identifiable data (PID) but wanted the image, while clinicians wanted both PID and image. He also talked about sequencing data: sequencing is now relatively easy, and the hard bit is using biometrics on the data. As Martyn explained, it now costs more to analyse a genome than to sequence it, and the big issue is sharing that data. Martyn joked that the “best way to share data is by Fedex”; many agreed that this may often be the case! The case studies showed that in HPC it’s often a computational problem. HPC Wales has three components to it, including awareness building around HPC and the creation of a Welsh network that is globally distributed and can be accessed from anywhere.

Martyn concluded that the main issues are around how to do the computing efficiently while the archiving issues continue to be secondary.

Research Data Storage at the University of Bristol: Caroline Gardiner, University of Bristol

Caroline Gardiner explained that at the University of Bristol her team had originally carried out a lot of high performance computing but were increasingly storing research data. She noted that the arts subjects are increasingly creating huge data sets.

Caroline admitted to collecting horror stories of lost data and using these as a way to leverage support. The Bristol solution has been BluePeta, a petascale facility created using £2m funding. The facility is purely for research data at the moment, not learning and teaching data, though it is an expandable facility.

Caroline explained that their success in this area came from many directions. Bristol already had a management structure in place for HPC and for research data storage, and they had access to the strategy people and those who held the purse strings. Bristol also have a research data storage and management board, and there continues to be buy-in from academics.

The process in place is that the data steward (usually the principal investigator, or PI) applies and can register one or more projects. There is then academic peer review, and storage policies are applied. There is a cost model in place: the data steward gets 5TB free and then has to pay £400 per TB per annum for disk storage. They are encouraging PIs to factor in these costs when writing their research grant applications. The facility is more for data that needs to be stored over the long term than for active data.
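To make the cost model concrete, here is a minimal sketch of the arithmetic using only the two figures reported in the talk (5TB free, £400 per TB per annum). The function name and the pro rata billing of part-terabytes are my own assumptions for illustration; Bristol’s actual charging rules may well differ.

```python
FREE_ALLOWANCE_TB = 5    # free allocation per data steward, as reported in the talk
PRICE_PER_TB_GBP = 400   # annual charge per TB beyond the allowance, as reported


def annual_storage_cost(total_tb: float) -> float:
    """Return the yearly charge in pounds for a given amount of stored data.

    Assumption (hypothetical, not confirmed by Bristol): only storage above
    the free allowance is billed, and part-terabytes are charged pro rata.
    """
    if total_tb < 0:
        raise ValueError("storage size cannot be negative")
    chargeable_tb = max(0.0, total_tb - FREE_ALLOWANCE_TB)
    return chargeable_tb * PRICE_PER_TB_GBP


if __name__ == "__main__":
    # Print the yearly charge for a few illustrative project sizes.
    for size in (3, 5, 12):
        print(f"{size} TB -> £{annual_storage_cost(size):,.0f} per year")
```

Under these assumptions a PI planning to store 12TB would budget £2,800 a year in a grant application, while anything up to 5TB would cost nothing.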

Bristol are also exploring options for offsite storage and will also be looking at an annual asset holding review. They are also looking at preparing an EPSRC roadmap and starting to address wider issues of data management.

In answer to a question Caroline explained that they had carried out a cost analysis against third-party solutions, but when using the big players (like Google and Amazon) the cost of moving the data was the issue. There was some discussion on peer-to-peer storage, but delegates were concerned that it would kill the network.

Data.bris: Stephen Gray, University of Bristol

Following on from Caroline’s talk, Stephen Gray talked about what was happening on the ground through data.bris. Stephen explained that the drivers for the project were meeting funder requirements (not just EPSRC’s), meeting publisher requirements, using research data in the REF and increasing the number of successful applications. Bristol have agreed a digital support role alongside the data.bris project, though this is all initially limited to the department of arts and humanities.

The team will initially be meeting with researchers and using the DMPOnline tool to establish funder requirements and ethical, IPR and metadata issues. After the planning there will be the research application and then, hopefully, research funding. The projects will then have access to BluePeta storage. Curation is planned to happen at the end of the project, when high-value data will be identified for curation. Minimal metadata should be added at this stage, though there is a balancing act here between resourcing and how much metadata is added. Bristol have a PURE research management system and the data.bris repository, where they can check the data, carry out metadata extraction and assign DOIs. They will then promote and monitor data use.

In the future the team also want to look into the use of external data centres. Themes running through the project are ongoing training and guidance, and advocacy and policy. Training will need to go to all staff, including IT support and academic staff, and they are hoping for some mandatory level of training.

Bristol are also planning on using the DCC’s CARDIO and DAF tools.

In the Q&A session delegates were interested in how Bristol had received so much top-down support for this work. It was explained that the pro VC for research was a scientist and understood the issues. While there was support for research data, it was felt that there could be more support for outputs.

Herding Cats – Research Publishing at Swansea University: Alexander Roberts, Swansea University

Alexander Roberts started off his presentation by saying that Swansea wants it all: all data, big data, notes scribbled on the back of fag packets, ideas, searchable and mineable data. Not only this, but Swansea would like it all in one place; currently they have a lot of departmental databases and various file formats in use. Swansea looked at a couple of different systems, including PURE, but wanted an in-house content management system; they had also inherited a DSpace repository. They wanted this system to integrate with their TerminalFour Web CMS and with their DSpace system Cronfa, and to give RSS feeds for staff research profiles, Twitter feeds, Facebook updates etc. There was a consultation process that allowed lots of relationships to be formed and the end users to be involved. People were concerned that if they passed over their data they wouldn’t be able to get it back. A schema was created for the system. They started off using SharePoint and were clear that they wanted everything in a usable format for the REF. The end result was built from the ground up: a simple form-based research information system that allows researchers to add their outputs as easily as possible. It integrates with the HR database and features DOI resolving and MathML. The ingest formats are RSS, XML, Excel, Access and others. It provides an Open Data Protocol (OData) endpoint which provides feeds to the Web CMS and personal RSS feeds.

Alexander ended by saying that in 2012 they would like to implement automatic updates to DSpace via SWORD and a searchable live directory of research outputs. They also want to have enhanced data visualisation tools for administrators. Mobile consideration is also a high priority as Swansea have a mobile-first policy.

Delivering an Integrated Research Management & Administration System: Simon Foster, University of Exeter

A Research Management and Administration System (RMAS) is more about managing data about projects but can also deal with research data. The Exeter project has been funded under the UMF, which is funded by HEFCE through JISC, and is part of HEFCE’s bigger vision of cloud computing and the join up of systems. HE USB is being used: a test cloud environment from Eduserv. Simon Foster described how the project had started with a feasibility study which looked at whether there was demand for a cradle-to-grave RMAS; 29 higher education institutions expressed interest. The project was funded and it was worked out that 29 HEIs phased in over ten years could save £25 million. The single supplier approach was avoided after concerns that it could kill all others in the market. The steering group looked at the processes involved and these were fed into a user requirement document. It was necessary that the system be cloud enabled and compliant with CERIF data exchange. Current possible systems include Pure, Avida etc. Specific modules were suggested. The end result will be a framework that will allow institutions to put out a mini-tender for RMAS systems asking specific institution-related questions. Institutions should be able to do this in 4 weeks rather than 6 months.

The next steps for the project are proof of concept deliverables using CERIF data standards and use of externally hosted services. They also want to work with other services, such as OSS Watch.

There followed a panel session which included questions around the cost implications of carrying out this work. One suggestion was to consider the cost of failed bids due to lack of data management plans.

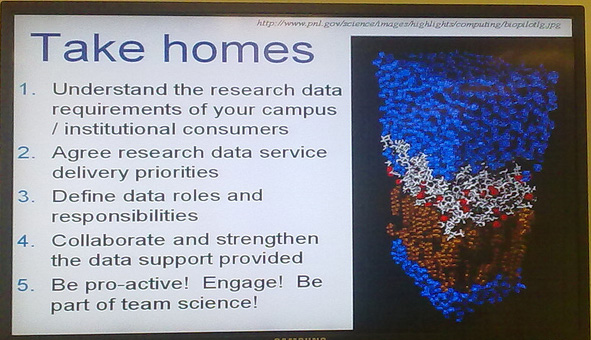

What can the DCC do for You?: Michael Day, UKOLN

Michael Day finished off the day with an overview of the DCC offerings and who they are aimed at (from researchers to librarians, from funders to IT services staff). He reiterated that part of RDM is bringing together different people from disparate areas and clarifying their role in the RDM process. The DCC tools include CARDIO, DAF, DMP Online, DRAMBORA. Some of the services include policy development, training, costing, workflow assessment etc. DCC resources are available from the DCC Website.

Conclusions

So after a day talking about data deluge while listening to a deluge of the more familiar sort (loud hail and rain) we were left with a lot to think about.

One interesting insight for me was that while the data deluge had come originally from certain science areas (astronomy, physics etc.), now more and more subjects (including arts and social sciences) are creating big data sets. One possible approach, advocated by a number of the day’s presenters, is to use HPC as a starting point from which to jump-start research data management. However there will continue to be a lot of data ‘outside of the circle’. As ever, join up is very important. Getting all the stakeholders together is essential, and that is something the DCC roadshows do very well. All presentations from the day are available from the DCC Web site.

The next roadshow will take place from 7 – 8 February 2012 in Loughborough. It is free to attend.
